By 2026, data centres' power consumption has come to represent a critical share of global energy supply, and tech giants are lining up to purchase nuclear power plants. But is the market correctly pricing in the physical limitations of the grid?

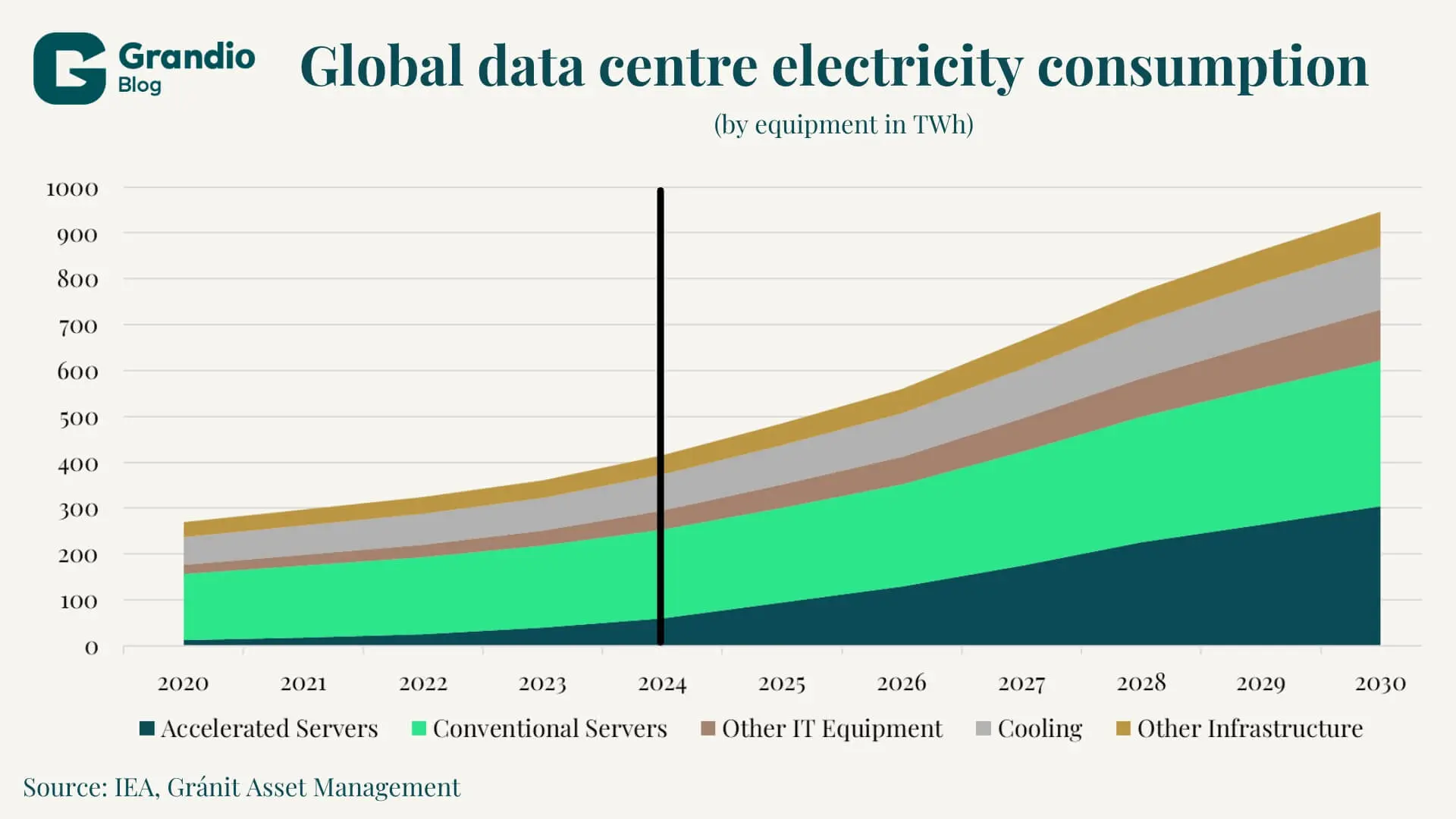

The rapid advancement of artificial intelligence has had a profound effect on global infrastructure as well. The energy needed to run these solutions (usually some form of LLM-like architecture) grew from negligible to systemically relevant by the end of 2026. According to the IEA, data centres consumed around 400 TWh of electricity in 2024, roughly equivalent to the annual consumption of France, or 6.5 times that of Hungary in the same year.

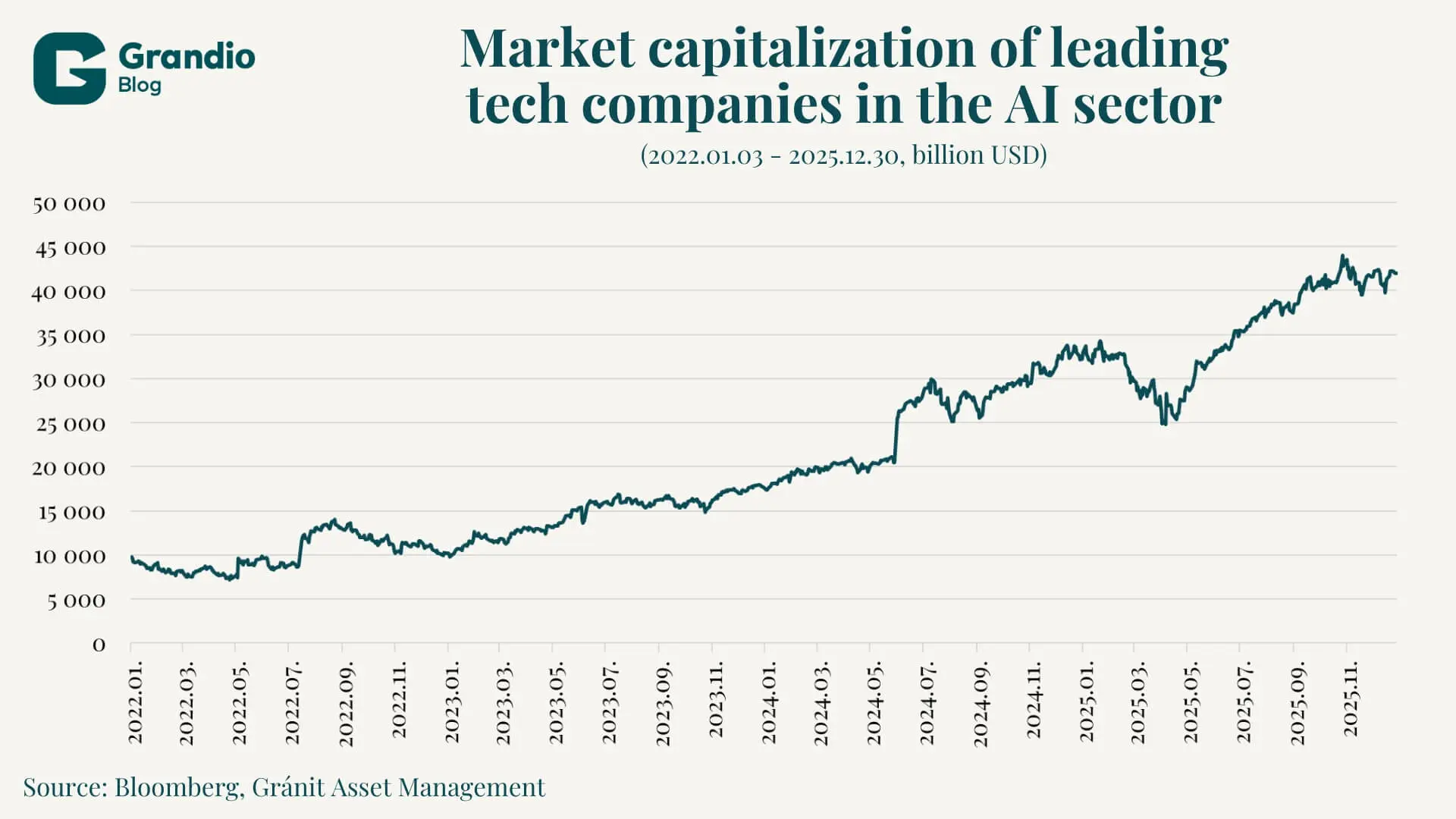

At the same time, the market capitalization of the leading tech companies in the AI sector (Microsoft, Alphabet, Meta, Amazon, Oracle, Nvidia, AMD, Broadcom, Micron, Salesforce, Tesla, Uber) has roughly quadrupled, reflecting investors' confidence in the sector's growth. Yet the widely held assumption that energy supply will expand organically to meet computing demand is fundamentally flawed. Data centres require vast amounts of land and water and, above all, an uninterrupted power supply available 24 hours a day, seven days a week. As a result, a structural shift is underway in the global economy: tech giants are aggressively acquiring old nuclear power plants, financing advanced small modular reactors (SMRs), and bypassing traditional utility frameworks, thereby triggering a new commodities supercycle for critical materials such as copper and uranium. All of this suggests that the ultimate trajectory of artificial intelligence will be determined not only by technological progress but also by the physical, geopolitical, and regulatory realities of the energy sector.

The illusion of infinite energy: exponential vs. logistic growth

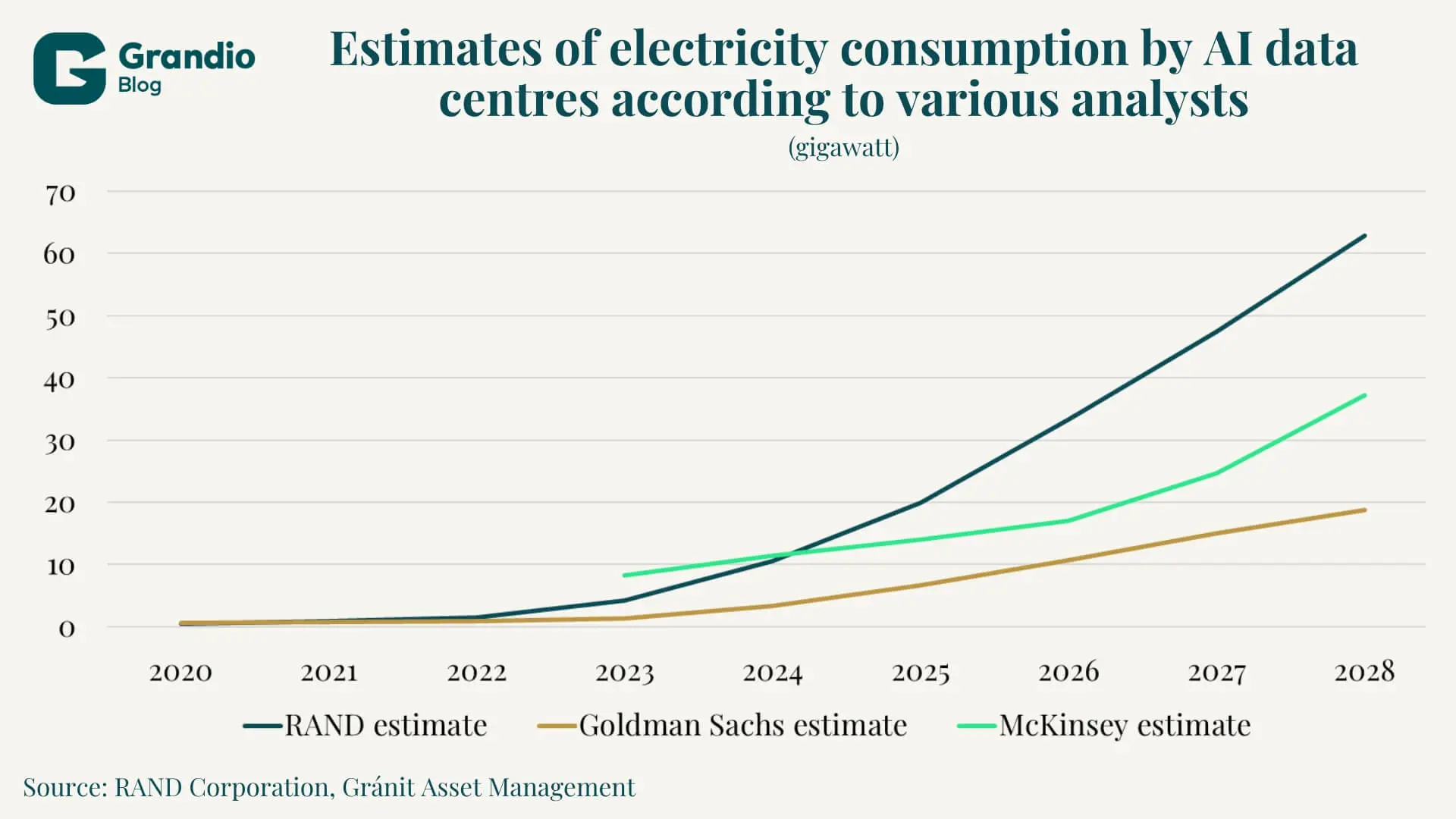

Industry analysts typically rely on exponential models when forecasting the capabilities of artificial intelligence and the associated energy demand. These projections assume that computational demand, and thus energy consumption, will double at regular or even shrinking intervals. For example, according to an extrapolation by the RAND Corporation, global AI data centres could need an additional 10 gigawatts (GW) of power capacity in 2025, 68 GW in total by 2027, and a staggering 327 GW by 2030. The 2027 figure would approach the total generation capacity of California in 2022, and by 2030 the demand could match the generation capacity of many developed countries.
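The RAND figures imply a very steep growth rate. The minimal sketch below (the helper names `cagr` and `doubling_time` are our own) computes the compound annual growth rate between the 2027 and 2030 projections and the doubling time it implies, assuming both figures are totals for the same quantity. The result is roughly 69% a year, i.e. demand doubling about every 1.3 years.

```python
import math


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


def doubling_time(rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + rate)


# RAND projection points quoted in the text (GW of AI data-centre capacity).
gw_2027, gw_2030 = 68.0, 327.0

rate = cagr(gw_2027, gw_2030, years=2030 - 2027)
print(f"implied growth: {rate:.1%} per year")
print(f"doubling time: {doubling_time(rate):.2f} years")
```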

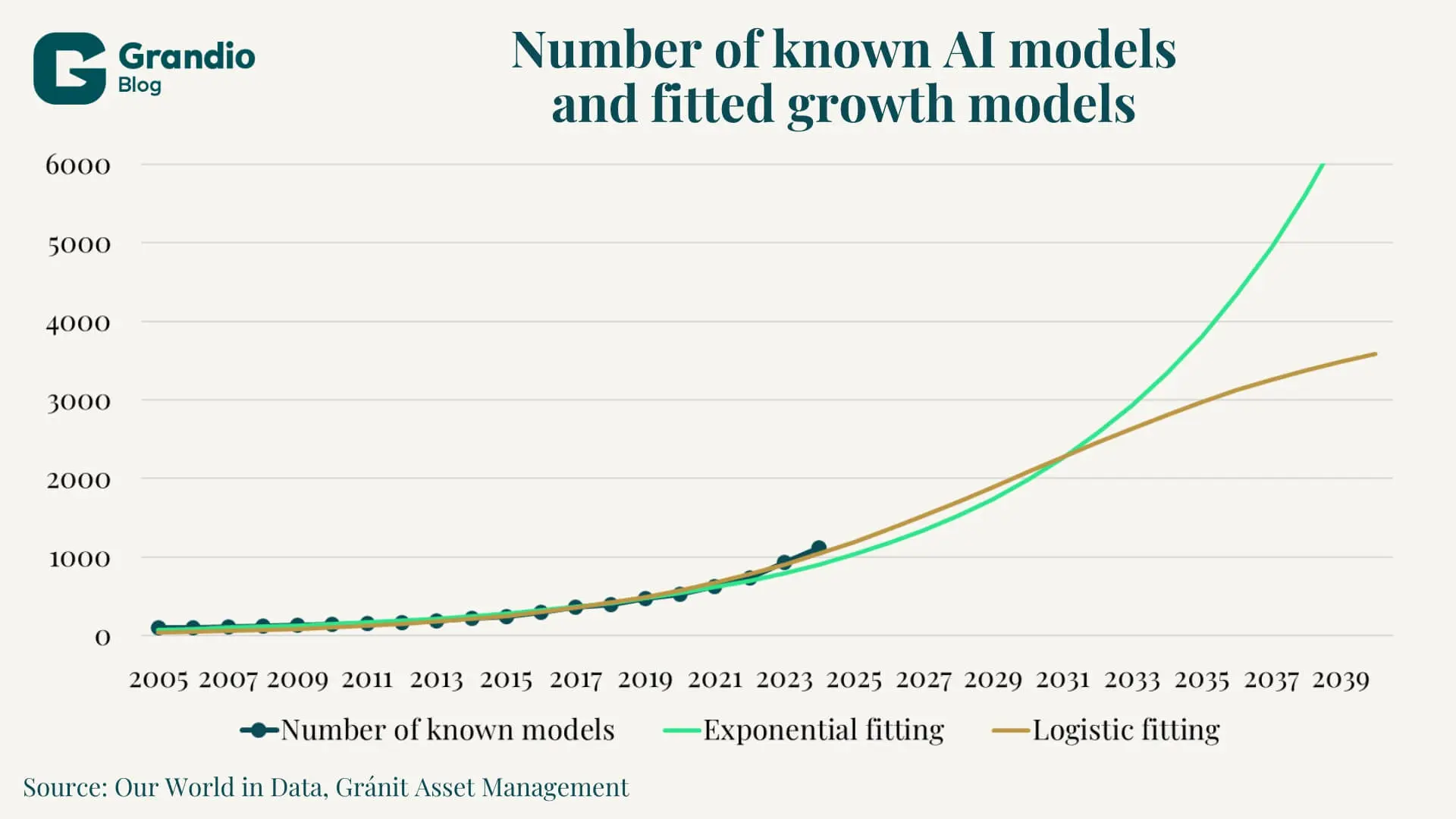

However, applying unbounded exponential models to physical systems can lead to serious errors. Resource-constrained systems are generally better described by logistic growth, whose S-shaped curve levels off over time. This pattern is typical when a new technology spreads, as the diffusion of the internet showed most recently. Under logistic growth, the curve looks exponential at first; beyond a certain point, however, the growth rate starts to decline as fewer and fewer unmet needs remain in the market. Adequate data on the use and efficiency of individual AI models are not available, but energy consumption can reasonably be assumed to scale with computational demand.
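In standard notation (our own, not taken from the sources cited here), with $E_0$ the initial energy demand, $r$ the initial growth rate and $K$ the ceiling set by physical constraints, the two models can be written as below. The last line shows why they are hard to tell apart early on: while $E$ is far below $K$, the logistic growth rate is almost exactly $r$, and it only falls noticeably as $E$ approaches $K$.

```latex
\begin{aligned}
E_{\exp}(t) &= E_0\, e^{rt} \\
E_{\log}(t) &= \frac{K}{1 + \dfrac{K - E_0}{E_0}\, e^{-rt}} \\
\frac{1}{E_{\exp}}\frac{dE_{\exp}}{dt} &= r,
\qquad
\frac{1}{E_{\log}}\frac{dE_{\log}}{dt} = r\left(1 - \frac{E_{\log}}{K}\right)
\end{aligned}
```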

Figure 4 illustrates how similar the two models are. Since we are in the early stages of technological development, both the exponential and the logistic fit can yield reasonable results; however, a slight slowdown was already visible in 2023–2024, which may be an early sign of logistic behaviour.
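The kind of early signal described here can be illustrated numerically. The sketch below uses hypothetical parameters (initial demand 1, growth rate 0.5 per year, ceiling 20; none of them fitted to real data) and prints year-on-year growth rates: the exponential path grows at a constant rate, while the logistic path starts close to it and then slows every single year. A falling growth rate, rather than the level of demand itself, is what distinguishes the two curves in their early phase.

```python
import math


def exponential(t: float, e0: float, r: float) -> float:
    """Unbounded exponential growth: E(t) = e0 * exp(r * t)."""
    return e0 * math.exp(r * t)


def logistic(t: float, e0: float, r: float, k: float) -> float:
    """Logistic growth starting from e0 at t = 0 and levelling off at ceiling k."""
    return k / (1 + (k - e0) / e0 * math.exp(-r * t))


def growth_rates(series: list[float]) -> list[float]:
    """Period-over-period growth rates (e.g. year-on-year)."""
    return [b / a - 1 for a, b in zip(series, series[1:])]


# Hypothetical parameters for illustration only, not fitted to any data.
E0, R, K = 1.0, 0.5, 20.0
years = range(11)

exp_path = [exponential(t, E0, R) for t in years]
log_path = [logistic(t, E0, R, K) for t in years]

for t, e, l in zip(years, exp_path, log_path):
    print(f"t={t:2d}  exponential={e:8.2f}  logistic={l:6.2f}")

print("exponential growth rates:", [round(g, 3) for g in growth_rates(exp_path)])
print("logistic growth rates:   ", [round(g, 3) for g in growth_rates(log_path)])
```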

The Jevons paradox and the limits of efficiency

A common counterargument against energy-constrained development, and one that companies are quick to invoke, is that future hardware and software will be significantly more energy-efficient, thereby flattening the demand curve. However, the Jevons paradox may describe AI's energy consumption better. According to this principle, formulated during the 19th-century Industrial Revolution, when technological progress makes the use of a resource more efficient, total consumption of that resource tends to rise rather than fall. For artificial intelligence, this means that as running models becomes cheaper, they are deployed in more and more places. Efficiency gains therefore do not remove the energy constraint: they expand usage until demand once again runs up against physical limits.
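A common way to make the Jevons paradox concrete is a constant-elasticity demand model. The sketch below rests on two simplifying assumptions of our own: the price of computation is driven entirely by its energy cost, and demand for computation responds to price with a fixed elasticity. Under these assumptions, doubling efficiency multiplies total energy use by 2^(elasticity − 1): it falls if demand is inelastic (elasticity below 1), stays flat at exactly 1, and rises if demand is elastic, which is the Jevons case.

```python
def energy_use(efficiency: float, elasticity: float, base_demand: float = 1.0) -> float:
    """Total energy spent on computation under a constant-elasticity demand curve.

    Hypothetical model, for illustration only:
    - price of one unit of computation = 1 / efficiency (energy is the only cost);
    - demand for computation Q = base_demand * price ** (-elasticity).
    """
    price = 1.0 / efficiency
    compute_demand = base_demand * price ** (-elasticity)
    # Energy = units of computation demanded / computation per unit of energy.
    return compute_demand / efficiency


for elasticity in (0.5, 1.0, 1.5):
    before = energy_use(efficiency=1.0, elasticity=elasticity)
    after = energy_use(efficiency=2.0, elasticity=elasticity)
    print(f"elasticity {elasticity}: doubling efficiency changes energy use by x{after / before:.2f}")
```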

In the short term, a very rapid increase in energy consumption can be expected under both growth scenarios. According to IEA estimates, data-centre electricity demand could nearly double between 2024 and 2030. This trend poses significant challenges, above all in the United States and particularly in Northern Virginia, which hosts the world's largest concentration of data centres, reportedly handling around 70 percent of global internet traffic. According to analyses by PJM Interconnection, the regional grid operator, and the utility Dominion Energy, data-centre demand in the area could reach 15–20 GW in the 2030s.

System-wide pressure on the PJM grid has pushed prices sharply higher. In PJM's capacity auction (the Base Residual Auction), which procures generation capacity years in advance to ensure that future demand can be met, prices rose more than elevenfold: from $28.92/MW-day for the 2024/25 delivery year to $329.17/MW-day for the 2026/27 delivery year, clearly illustrating the extent of the capacity shortfall. PJM has warned that, without major infrastructure development, there could be a shortage of up to 60 GW of capacity in the coming decade.
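To put the auction prices into perspective, the sketch below computes the price ratio and a simplified annual capacity cost at each clearing price. The 1,000 MW obligation is a hypothetical round number, and applying a single flat price is a simplification: actual costs depend on zonal prices and on how PJM allocates capacity costs to each load, so this is only an order-of-magnitude illustration. At the 2026/27 price, 1,000 MW of capacity costs about $120 million a year, against roughly $10.6 million at the 2024/25 price.

```python
# Clearing prices quoted in the text, in $/MW-day.
PRICE_2024_25 = 28.92
PRICE_2026_27 = 329.17


def annual_capacity_cost(price_per_mw_day: float, mw: float, days: int = 365) -> float:
    """Simplified yearly capacity cost for `mw` of obligation at a flat clearing price."""
    return price_per_mw_day * mw * days


ratio = PRICE_2026_27 / PRICE_2024_25
print(f"price increase: {ratio:.1f}x")

# Hypothetical 1,000 MW capacity obligation, for illustration only.
for price in (PRICE_2024_25, PRICE_2026_27):
    cost = annual_capacity_cost(price, mw=1_000)
    print(f"${price}/MW-day -> ${cost / 1e6:.1f} million per year for 1,000 MW")
```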

The two constraints: copper and uranium

At the same time, the physical infrastructure that AI requires is fundamentally resource-intensive, which, according to macroeconomic analysts, could trigger a new commodities supercycle. The seven largest tech companies are spending more than $680 billion on AI-related developments, and these investments have the greatest impact on two raw materials: copper and uranium.

Copper is indispensable to the global economy thanks to its excellent electrical and thermal conductivity, and in AI data centres it is a key component of virtually every piece of equipment. According to JPMorgan research, every megawatt of data-centre capacity requires 20–40 tons of copper, so a single 500 MW centre could require 10,000–20,000 tons, significantly increasing demand for the metal. Taking into account an earlier, very conservative Goldman Sachs estimate, this could mean at least 1 million tons of additional demand between 2025 and 2028.
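The JPMorgan intensity range lends itself to a quick back-of-the-envelope check. The sketch below (function names are our own) converts data-centre capacity into copper tonnage and back: a 500 MW campus needs roughly 10,000–20,000 tons, and 1 million tons of additional demand corresponds to roughly 25–50 GW of new capacity at those intensities.

```python
# JPMorgan range quoted in the text: tons of copper per MW of data-centre capacity.
TONS_PER_MW_LOW = 20
TONS_PER_MW_HIGH = 40


def copper_demand(capacity_mw: float) -> tuple[float, float]:
    """Low and high estimates of copper (tons) for a given data-centre capacity."""
    return capacity_mw * TONS_PER_MW_LOW, capacity_mw * TONS_PER_MW_HIGH


def capacity_for_copper(copper_tons: float) -> tuple[float, float]:
    """Capacity (MW) that a given copper tonnage corresponds to, at high and low intensity."""
    return copper_tons / TONS_PER_MW_HIGH, copper_tons / TONS_PER_MW_LOW


print(copper_demand(500))               # (10000, 20000) tons for one 500 MW campus
print(capacity_for_copper(1_000_000))   # (25000.0, 50000.0) MW, i.e. 25-50 GW
```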

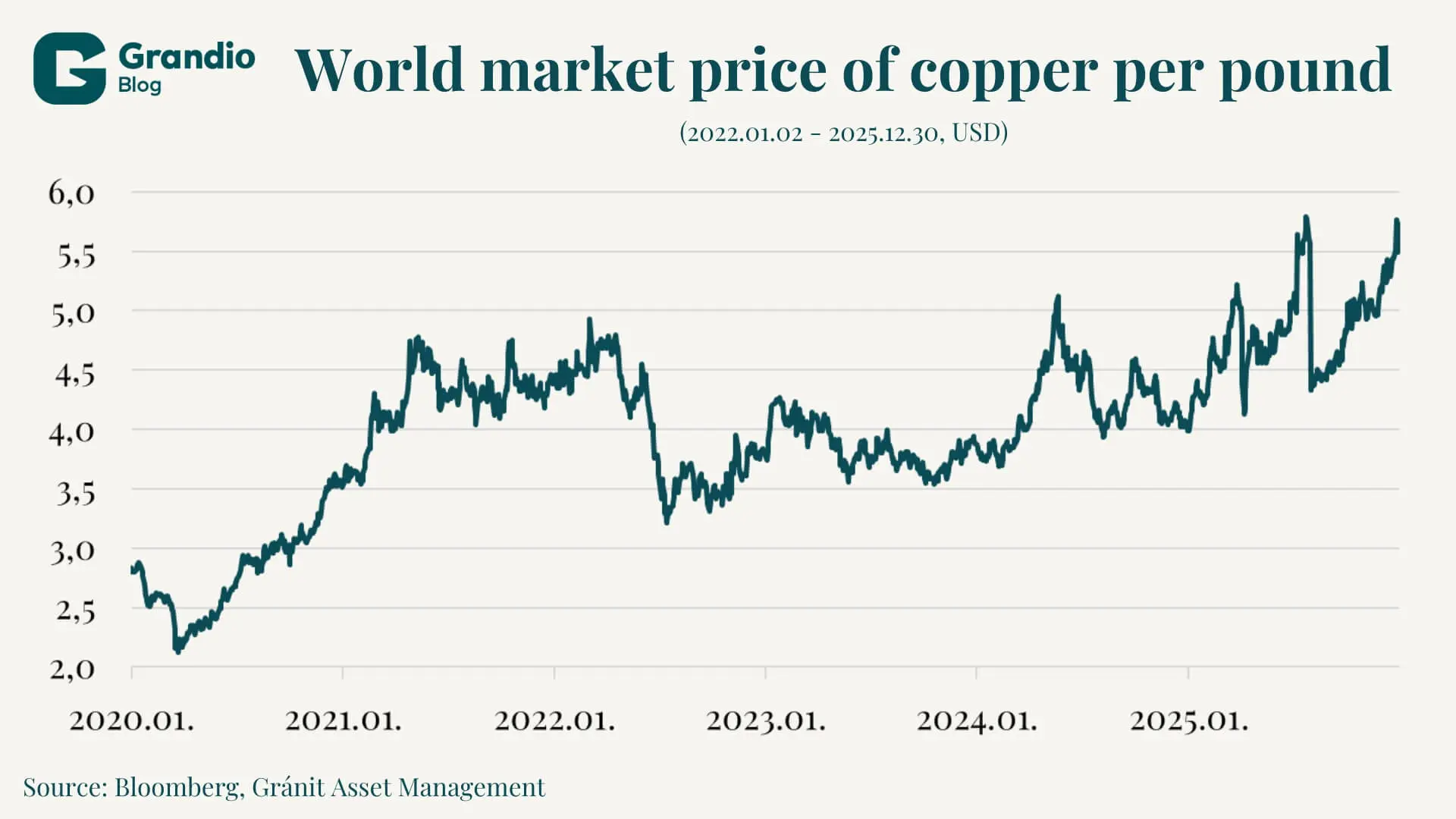

This excess demand puts further pressure on the mining industry, which must also meet the additional demand driven by widespread electrification, the green transition, electric vehicle manufacturing, and ongoing infrastructure development. Further challenges include declining ore grades at operating mines, a lack of significant new discoveries, and a long investment horizon, currently about 17 years from discovery to production. These conditions have pushed the global copper price to historic highs, a trend that is likely to continue as demand rises.

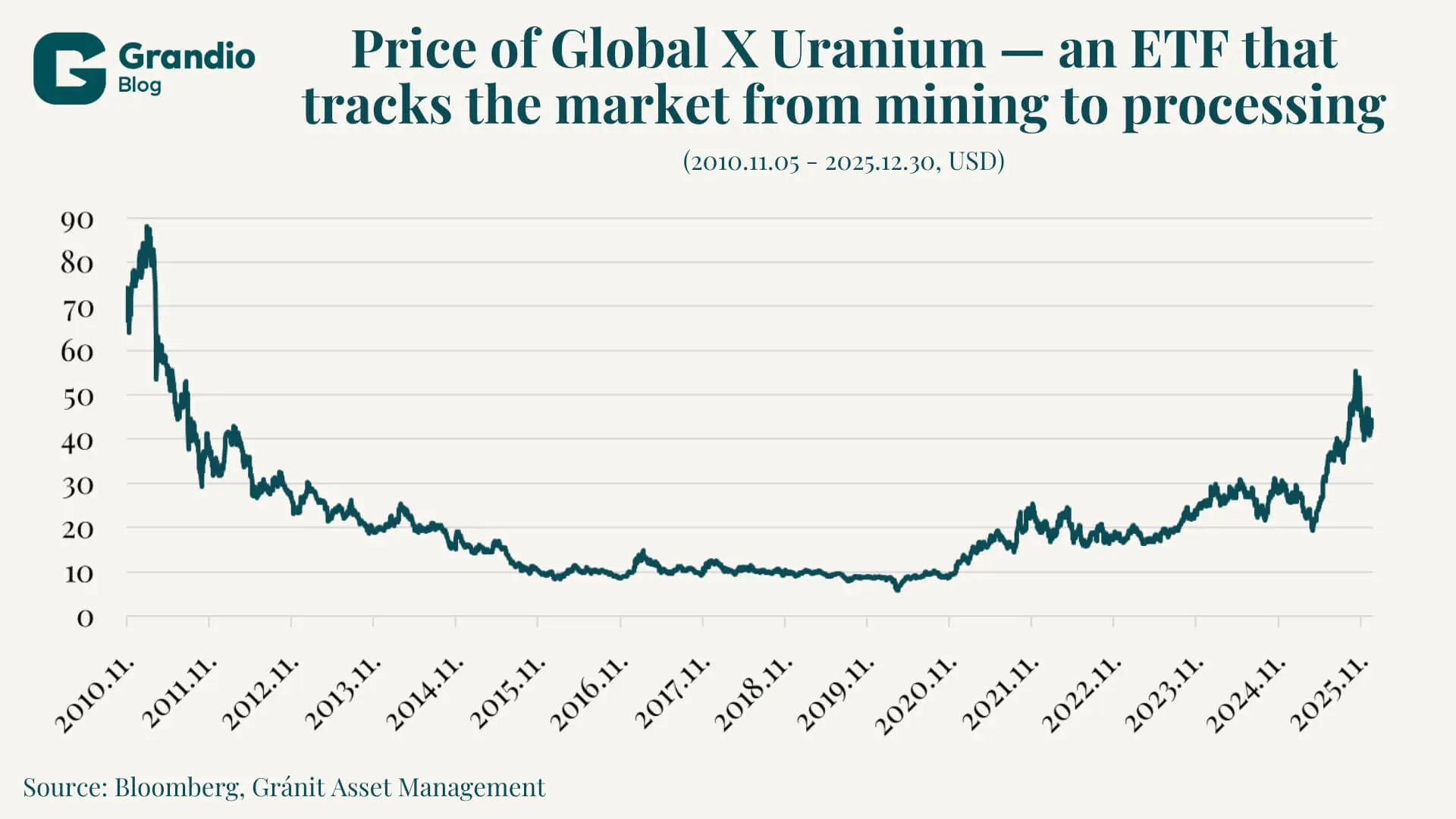

Whereas copper is needed to build AI infrastructure, uranium has come into focus as a way to power it. Data centres require a continuous, stable, and high-volume power supply, and nuclear energy can meet these requirements cost-effectively while also satisfying carbon-neutrality commitments. This has brought about fundamental shifts in the uranium market. After the Fukushima disaster, the industry spent years on a downward trajectory, with low investment and limited development. Responding to sudden spikes in demand is particularly difficult because supply is highly concentrated: 40 percent of the world's raw uranium is mined in Kazakhstan, while about half of enrichment takes place in Russia.

Recognizing these circumstances, tech companies with a stake in AI have embarked on a kind of nuclear renaissance. Rather than waiting for the energy sector to respond, they have invested in it directly and on an unprecedented scale. Microsoft signed a 20-year agreement with Constellation Energy to purchase the entire output of the Crane Clean Energy Center, underpinning the planned 2028 restart of a recently closed nuclear power plant. In 2024, Amazon Web Services purchased Talen Energy's 960 MW Cumulus data centre for $650 million, and in the summer of 2025 the two companies expanded their collaboration to cover 1,920 MW of capacity. Meanwhile, Google, working with Kairos Power, plans to address its energy supply challenges with multiple SMRs by 2035.

Changing investment priorities

In the first half of the decade, AI-related investments were concentrated in the semiconductor and software sectors, and on the capital markets many companies saw their valuations surge on the idea of unlimited growth. As the industry matures, however, the physical limits of growth are becoming increasingly important, and the role of the energy and mining sectors is growing. Companies that can reliably supply data centres with electricity are receiving significant orders, which have substantially increased their value. Meanwhile, the nuclear industry, after a decade of stagnation, has also begun to rebound.

The prevailing narrative—that artificial intelligence is purely a cloud-based product with no physical footprint—masks the reality of the sector. Forecasts based on the exponential growth of AI capabilities and developments must also factor in energy and raw material market constraints, the long lead times of copper mining, and infrastructure deficiencies. The aggressive, unprecedented moves by tech giants to secure nuclear facilities and pioneer SMR technologies confirm that the tech sector has identified energy as one of the ultimate determinants of future market dominance.

As the industry shifts from sporadic research, development and model training toward widespread, continuous use, the pace of technological innovation will increasingly be dictated by the limitations of the power grid. The next era of investment in the sector will be defined by physical infrastructure: copper mining for data centres, uranium enrichment, and grid expansion.

The author of this article is Máté Sándor Fonyi, a student at Mathias Corvinus Collegium (MCC).

Legal Disclaimer

This document has been prepared by Gránit Alapkezelő Zrt. (registered office: 1134 Budapest, Váci út 17; company registration number: 01-10-046307) for marketing and informational purposes. Accordingly, it has not been produced in accordance with legal requirements designed to promote the independence of investment research. Nor is it subject to any prohibition on dealing ahead of the dissemination of investment research. This document does not constitute investment research or investment advice. Any data presented refers to past performance, and past performance is not a reliable indicator of future results. Each investor must make investment decisions at their own discretion and responsibility.