Many people treat the AI boom only as a chip story, but in reality, the binding constraints are often electricity, grid connection, cooling, and the right location. The current wave of data center construction is therefore not only about technology but also about energy and infrastructure. The most extreme version of this logic is the idea of launching data centers into space – an idea that has recently resurfaced in connection with Elon Musk and SpaceX. How much would this cost, and would it be worth it?

Over the last two years, the debate around AI has often sounded as if everything depends on microchips. The discussion has been about whether we have enough GPUs, enough memory, and enough manufacturing capacity, and all of this obviously matters. Still, in the background, a less visible but much stronger bottleneck has formed: power and infrastructure.

A modern data center is no longer a traditional IT project housed in a local server room, but more like an industrial facility that trains and runs models across massive server farms. This requires a considerable amount of electricity, a stable grid connection, and robust cooling. In many cases, that also means significant water use, plus a location where all of this is politically feasible and the permits are attainable. When all of these constraints tighten at the same time, strange ideas start to appear, and this is exactly where the space-based data center concept comes in, an idea that has resurfaced around Musk and SpaceX in recent weeks.

The question is not whether it is physically possible to put servers into orbit; in theory, it is. However, at an industrial scale, it is not realistic today. The real question is whether this could be a solution to current energy constraints, and whether it could ever be a smart and profitable investment.

Chips are only the entry ticket – now the constraint is increasingly electricity

There is a simple but important relationship here: scaling compute capacity in practice means scaling electricity demand. According to the International Energy Agency’s recent comprehensive analysis, global data center electricity consumption in 2024 was roughly 415 TWh, about 1.5 percent of total global electricity consumption. In the IEA baseline scenario, it could rise to around 945 TWh by 2030 – more than doubling and pushing the share closer to 3 percent of global consumption.
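To make the pace of that increase more tangible, the two IEA figures above can be converted into an implied average annual growth rate. The snippet below is only a back-of-the-envelope sketch: it assumes smooth compound growth between 2024 and 2030, which the IEA scenario itself does not necessarily imply, and the function name is made up for illustration.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_twh / start_twh) ** (1 / years) - 1


START_TWH = 415.0  # IEA estimate for 2024, as cited in the text
END_TWH = 945.0    # IEA baseline scenario for 2030, as cited in the text

growth_multiple = END_TWH / START_TWH
# Assumption: growth compounds evenly over the six years from 2024 to 2030.
annual_rate = implied_annual_growth(START_TWH, END_TWH, years=2030 - 2024)

print(f"Growth multiple: {growth_multiple:.2f}x")
print(f"Implied annual growth: {annual_rate:.1%}")
```

Under these assumptions, the result is on the order of 15 percent a year, sustained for six years, which is a very different pace from the one at which grids are usually expanded.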

The key point is not only the size of the global total, but the question of where exactly this demand shows up, because the growth is not evenly distributed; it concentrates into clusters. Where the grid is already stressed, a data center is not simply one more consumer; it becomes a system-level issue that can create real operational and political problems. The IEA also draws attention to the fact that grid constraints and backlogs in connection requests can delay planned data center projects. It highlights that lead times for key grid components – primarily transformers and high-voltage cables – have clearly increased in recent years.

This is a mismatch that many still underestimate: people expect infrastructure to be built at the speed at which software scales. But grids and physical hardware do not work like software; you cannot copy and distribute them instantly or infinitely. Grid expansion requires materials, permits, and often public procurement, and it unfolds on multi-year timelines, often closer to 5 to 10 years.

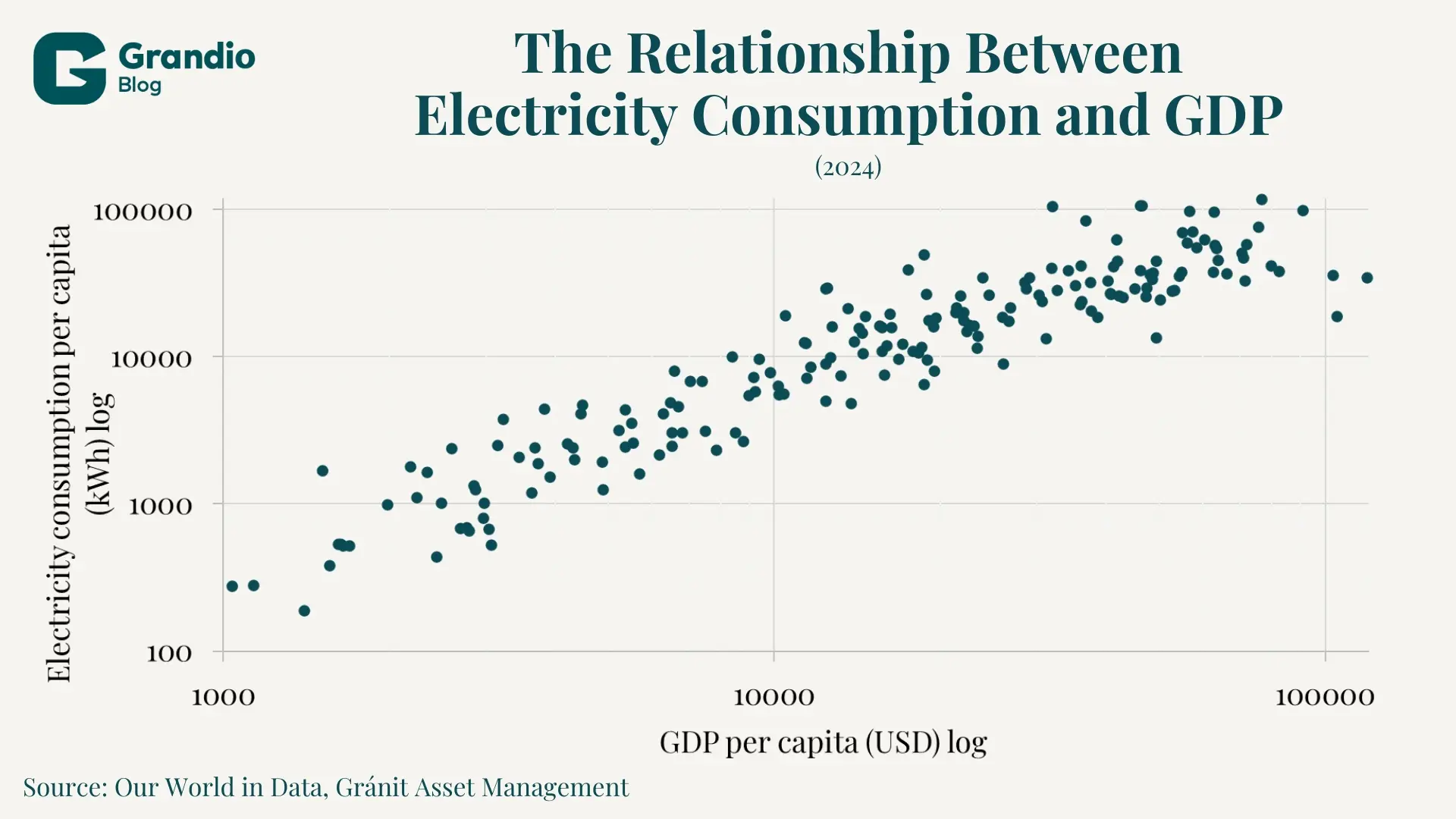

It is also important to see that energy is not some secondary variable; it is a core input for almost every economic activity. International data shows that electricity consumption and GDP per capita move closely together, and the pattern makes one thing very clear: there is no high-income economy with persistently low energy use, and historically, it is hard to reach and sustain high-income levels without reliable and abundant electricity. This is why a sudden rise in electricity demand driven by AI is not only a technology question; it is also a growth question. Development requires more energy, and it is not enough to invest directly in growth without also investing in the background infrastructure that produces and delivers that energy.

Data centers as industrial facilities: power, cooling, water, connection

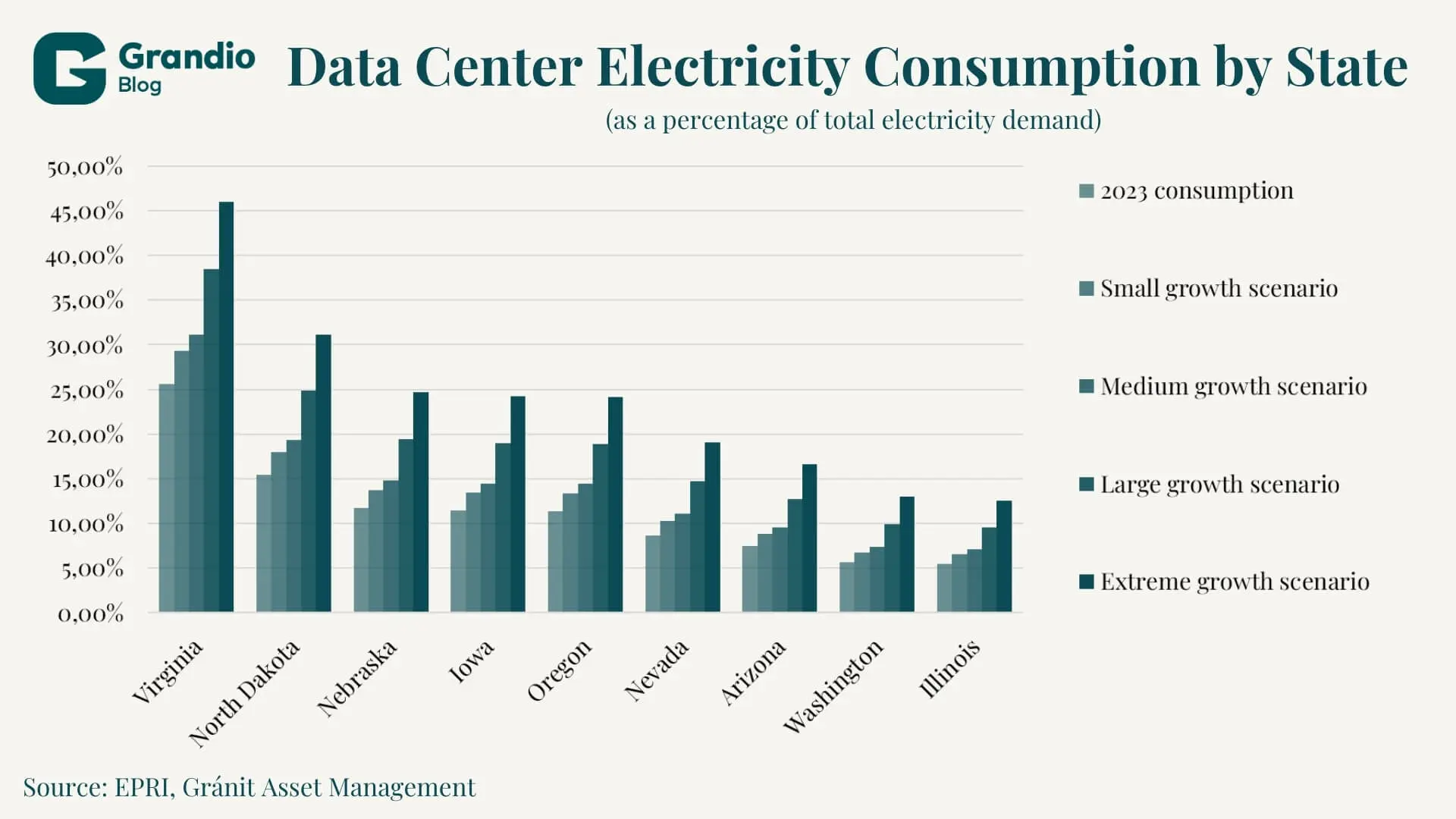

The United States shows this reality very clearly. A Pew Research Center summary reports that in Virginia, in 2023, data centers accounted for roughly 26 percent of the state’s total electricity demand, and several other states also show double-digit shares.

These kinds of concentrations matter because the question is no longer whether a country has enough electricity overall, but whether a given region has the production and connection capacity – meaning whether the necessary power can be produced and actually delivered to the site. Large AI-focused data centers are often planned to consume hundreds of megawatts, which can be comparable to the load of a smaller city. This forces the surrounding infrastructure to be planned and upgraded accordingly.

Cooling is also not a side issue, because cooling itself can require large amounts of energy – and often significant water use as well. Heat must be removed, and local conditions determine which technology is feasible and what level of social conflict it can cause. This is the point where a data center project turns into an issue of local politics, water management, and grid development.

Why location is critical and why space-based data centers are tempting

That is why, when you want to place a data center, the decision quickly collapses into a few basic questions:

- Does the location have enough power at a reasonable price?

- Does the project have a realistic connection timeline to the grid?

- Does the project have a workable and affordable cooling solution?

- Can the project secure the necessary permits and local political support?

If any of these fail, the project is delayed, gets more expensive, or stalls and is forced to search for a new location. In Europe, this is becoming increasingly visible, since the European Commission is already treating data center energy demand as a broader energy policy issue, and it also points to significant growth expected in the EU by 2030.

We can see that we are operating under intense scarcity. Solutions are time-consuming, while demand keeps growing and growth is accelerating. In this environment, it is understandable why the space idea appears as a potential answer. In theory, in orbit there is effectively unlimited room to build, there are no neighbors complaining about noise, and solar power can be more continuous due to near-constant exposure compared to many terrestrial locations. Reuters reported that SpaceX presented U.S. regulators with a system that could become an orbital data center network of up to one million satellites powered by solar energy in Earth orbit.

At the same time, reactions from other tech leaders have also emerged. Business Insider reported that Sam Altman said at an event in New Delhi that, under today’s technology and financing conditions, this idea is not realistic, and that it will not matter at scale in this decade. This should not be read only as another episode in the long-running personal conflict between Altman and Musk; it also reflects the current mainstream view on the topic. Space-based data centers may become workable in the long run, but they are not the near-term solution.

Why space does not solve the energy and cooling problem quickly

When people hear “space,” many instinctively say it is cold there, so cooling must be easy. However, there is a twist in the physics: in a vacuum, there is no air and therefore no convection, so heat cannot be carried away by flowing air or liquid in the surrounding environment, as it is on Earth. That means heat rejection is mainly a radiation problem, which requires a large surface area. At data-center scale, this implies that a huge radiator surface would have to be placed in orbit. While you can debate exact numbers, the core point remains: in space, rejecting heat by radiation is surface-area intensive. More surface area means more mass, and that mass must be launched into orbit. Launch costs scale with mass, so every additional square meter of radiator works directly against cost-effectiveness.
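The surface-area argument can be illustrated with the Stefan-Boltzmann law, which gives the power an ideal radiator emits per unit area as emissivity times the Stefan-Boltzmann constant times the fourth power of its temperature. The sketch below is a rough order-of-magnitude estimate, not an engineering design. The 100 MW heat load, the 300 K radiator temperature, and the emissivity of 0.9 are illustrative assumptions, not figures from any actual proposal, and the calculation ignores absorbed sunlight, infrared radiation from Earth, and the option of radiating from both sides of a panel.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9) -> float:
    """Emitting area needed to radiate heat_w to deep space.

    Simplification: the radiator sees only cold space, so absorbed sunlight
    and Earth's infrared are ignored. Real designs would need more margin.
    """
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * temp_k ** 4
    return heat_w / flux_w_per_m2


# Illustrative assumptions (not from the article's sources):
HEAT_LOAD_W = 100e6      # 100 MW of waste heat
RADIATOR_TEMP_K = 300.0  # radiator surface temperature, about 27 degrees C

area = radiator_area_m2(HEAT_LOAD_W, RADIATOR_TEMP_K)
print(f"Required emitting area: {area:,.0f} m^2 ({area / 1e6:.2f} km^2)")
```

With these inputs, the required emitting area comes out at roughly a quarter of a square kilometer. A hotter radiator would shrink that figure considerably because of the fourth-power dependence on temperature, but electronics limit how hot the coolant loop can run, which is why the trade-off does not disappear.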

The second problem is maintenance and repairs. On Earth, data centers are serviced continuously and failed components are replaced immediately. In space, this becomes a far more difficult logistical challenge, and on top of that, you face radiation and reliability issues. What counts as top-tier technology on Earth may not function in the same way in orbit, because it was not designed for those conditions. If off-the-shelf hardware cannot survive there, operators may need entirely new designs.

Finally, if you were to deploy massive compute capacity in space – like the one-million-satellite concept – then orbital management, collision-risk monitoring, insurance, and regulation become part of the core system, and those are substantial and costly challenges to solve.

There are still legitimate use cases for space-based computing – for example, when satellites process part of their observation data in orbit and downlink only the essential results – but this is still very far from placing a full-scale data center in space.

The real story is decided on Earth

The space data center idea is catchy, visually powerful, and feels like science fiction. But it is clear that this is not the most important direction right now. The important part is what is happening on Earth, because AI infrastructure is already a major – and expanding – story in energy and real estate.

The IEA also notes that global data center investment reached roughly $500 billion in 2024, and that growth accelerated sharply over the last two years. If we take this seriously, then the winners in AI will not only be chip makers and AI model developers, but also those who can secure the right locations, grid connections, electrical and cooling equipment, affordable power, and the social acceptance that makes projects possible.

This is why real estate matters, especially land, buildings, contracts, and the precise location. Infrastructure matters just as much, including proximity to substations, transformers, redundancy, grid fees, regulation, and the time required to get connected.

This is also where investor thinking can connect in a practical way, because the question becomes extremely grounded: where can you find land and grid capacity that can be monetized quickly, and what are the exact bottlenecks that will reprice certain locations, operators, and services?

Five indicators worth tracking in the data center boom

The visible part of a data center is hardware, but risks and returns are often decided elsewhere. The following five indicators help you quickly judge whether a project is viable and whether a region can remain competitive.

- Contracted capacity in megawatts and the real connection timeline

What matters is not when the building is finished, but when it receives power and has a guaranteed grid supply, because a completed facility without electricity is not a functioning facility. Processing connection requests and network upgrades is slow, and this is now a more significant risk factor than construction itself.

- Electricity cost and the hedging structure

Total cost is not only the current market price; it also depends on how much supply is fixed long-term through mechanisms like PPAs, whether there is on-site generation or storage, and how exposed the project is to spot prices and grid fees. The same facility looks very different under stable energy prices than under volatility.

- Power Usage Effectiveness and efficiency

Power Usage Effectiveness (PUE) shows how much energy goes to operations beyond IT load, mainly for cooling and losses. Efficiency improvements can reduce the cost of computing, but local conditions such as climate, technology choices, water access, and regulations matter, so the same target value is not realistic everywhere.

- Cooling strategy, water exposure, and permitting risk

Cooling technology and water demand create permitting risk and can undermine social acceptance. In water-stressed regions or dense areas, cooling and water use can quickly become a local conflict, causing delays, extra costs, capacity limits, and, in some cases, even relocation.

- Capital Expenditure per megawatt and lead times for critical equipment

CAPEX per contracted megawatt makes projects comparable. The building is not the only expensive part, since electrical infrastructure such as substations, transformers, switchgear, cooling, and redundancy often account for a large share of total CAPEX. Long lead times for critical equipment can also delay commissioning and therefore push out revenue.
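Two of these indicators are simple ratios, and a short sketch shows how they might be computed side by side. PUE is commonly defined as total facility energy divided by the energy used by IT equipment, and CAPEX per megawatt is total capital expenditure divided by contracted capacity. The class name and all input values below are hypothetical and chosen only for illustration; they do not describe any real project.

```python
from dataclasses import dataclass


@dataclass
class ProjectSnapshot:
    """Hypothetical container for two of the indicators discussed above."""
    total_facility_mwh: float  # all energy drawn by the site over a period
    it_load_mwh: float         # energy consumed by IT equipment alone
    capex_usd: float           # total capital expenditure
    contracted_mw: float       # contracted grid capacity

    def pue(self) -> float:
        # 1.0 would mean every unit of energy reaches the IT equipment.
        return self.total_facility_mwh / self.it_load_mwh

    def capex_per_mw(self) -> float:
        return self.capex_usd / self.contracted_mw


# Made-up example values, for illustration only.
example = ProjectSnapshot(
    total_facility_mwh=130_000,
    it_load_mwh=100_000,
    capex_usd=1_200_000_000,
    contracted_mw=100,
)

print(f"PUE: {example.pue():.2f}")
print(f"CAPEX per MW: ${example.capex_per_mw():,.0f}")
```

In this invented case, 30 percent extra energy on top of the IT load goes to cooling and losses. Normalizing spend by contracted megawatts, rather than by floor area, is what allows sites of different sizes to be compared.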

Space is a thought experiment, not a near-term solution

Space-based data centers are a useful thought experiment because they highlight the core constraint. The limits to AI adoption are often not only algorithms or chips, but also electricity price and availability, grid capacity, cooling options and costs, permits, and – often – the social acceptance of large clusters.

The global numbers suggest that the pressure is already here and moving fast, since going from roughly 415 TWh in 2024 to around 945 TWh by 2030 means more than doubling in six years. If this trajectory continues, one of the biggest lessons of the next few years will be that the AI race will be partly decided by grids and location choices. The winners will be the places where electricity, connection, and infrastructure are available at reasonable cost and with social acceptance.

The author of this article is Marcell Kovács, a student at Mathias Corvinus Collegium.

Legal Disclaimer

This document has been prepared by Gránit Alapkezelő Zrt. (registered office: 1134 Budapest, Váci út 17; company registration number: 01-10-046307) for marketing and informational purposes. Accordingly, it has not been produced in accordance with legal requirements designed to promote the independence of investment research. Nor is it subject to any prohibition on dealing ahead of the dissemination of investment research. This document does not constitute investment research or investment advice. Any data presented refers to past performance, and past performance is not a reliable indicator of future results. Each investor must make investment decisions at their own discretion and responsibility.